Recently, I have been involved in the design and deployment of VMware Cloud Foundation on Dell EMC VxRail. There are some notable items that you need to consider when using Dell EMC VxRail as your hardware layer in combination with VMware NSX-T as the network overlay. So it was time to write down what I have learned so far about the VxRail NSX-T considerations.

This blog post is focused on the NSX design considerations that are related to the physical level when using the Dell EMC VxRail hardware.

First, I am going to talk about VMware NSX-V, because a lot of customers are already running Dell EMC VxRail in combination with NSX-V and will need to move to NSX-T at some point.

VMware NSX-V

If you are already using Dell EMC VxRail with VMware NSX-V, your physical NIC configuration will in most cases look like one of the following:

- Scenario 01: Dual port physical NIC – 10 Gbit

- Scenario 02: Dual port physical NIC – 25 Gbit

The default configuration that I see in the field at this moment is based on a single dual-port card with either 10 Gbit or 25 Gbit. This is fine for VMware NSX-V but not for its replacement…

VMware NSX-T

When using Dell EMC VxRail with VMware NSX-T you are required to use four physical NICs! This is because of a limitation in the Dell EMC VxRail software that makes a “PowerEdge server” a “VxRail server”.

The first official Dell EMC statement from their VMware Cloud Foundation on VxRail Architecture Guide: “NSX-T based VI WLD will require additional uplinks, whatever uplinks were used to deploy the VxRail vDS cannot be used for the NSX-T N-VDS“.

The second official Dell EMC statement from their VMware Cloud Foundation on VxRail Architecture Guide: “Note: NSX-T will use the next two available vmnics that are both the same speed for every node in the cluster“.

So this leaves us with three scenarios provided by Dell EMC for the VxRail nodes:

- Scenario 01: Quad-port physical NIC

- Scenario 02: Quad-port physical NIC (two ports used) with dual-port physical NIC

- Scenario 03: Dual-port physical NIC with dual-port physical NIC.
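To make the "next two available vmnics that are both the same speed" rule a bit more concrete, here is a minimal Python sketch of how I read it. This is only my interpretation of the quoted sentence, not the actual VxRail or Cloud Foundation logic. The `Vmnic` class, the `pick_nsxt_uplinks` function and the example speeds are all hypothetical names and values made up for this illustration.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Vmnic:
    """Hypothetical model of one physical NIC port on a node."""
    name: str
    speed_gbit: int
    in_use: bool  # True if the port is already claimed by the VxRail vDS


def pick_nsxt_uplinks(vmnics: List[Vmnic]) -> Optional[Tuple[str, str]]:
    """Return the first two unused ports that share a speed, or None.

    Assumption: "next two available" means the earliest pair in
    enumeration order among ports not used by the VxRail vDS.
    """
    free = [nic for nic in vmnics if not nic.in_use]
    for i, first in enumerate(free):
        for second in free[i + 1:]:
            if second.speed_gbit == first.speed_gbit:
                return (first.name, second.name)
    return None


# Scenario 03: two dual-port cards, first two ports used by the VxRail vDS.
dual_plus_dual = [
    Vmnic("vmnic0", 25, in_use=True),
    Vmnic("vmnic1", 25, in_use=True),
    Vmnic("vmnic2", 25, in_use=False),
    Vmnic("vmnic3", 25, in_use=False),
]

# The typical NSX-V era node: a single dual-port card, nothing left over.
single_dual = dual_plus_dual[:2]

print(pick_nsxt_uplinks(dual_plus_dual))  # ('vmnic2', 'vmnic3')
print(pick_nsxt_uplinks(single_dual))     # None
```

The second call shows why the single dual-port card is the problem: both ports are already taken by the VxRail vDS, so nothing is left for the N-VDS.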

Advice

Dell EMC VxRail is the only hardware platform currently on the market that requires four physical NICs to operate with NSX-T. This means you have to make sure your hardware and datacenter are capable of supporting this requirement. You need to make some choices surrounding the physical network cards, network capacity and datacenter rack space.

So let’s start with my list of VxRail NSX-T considerations!

Physical Network Card

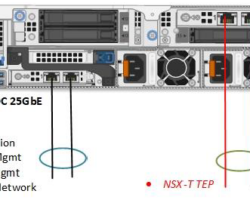

When you are at the point of buying the Dell EMC VxRail solution, buy at least a quad-port NIC configuration. Personally, I prefer the double dual-port NIC setup, as shown in the image above.

I prefer this hardware setup because of the hardware redundancy created by two cards, each with its own chip and PCIe slot. This reduces the chance of losing all your network connections when a physical NIC dies.

Another recommendation is to buy physical NICs that support 25 Gbit. The price difference is minimal and it makes the setup more future proof.

Top of Rack (TOR)

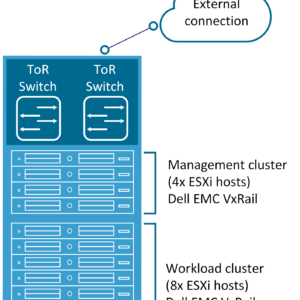

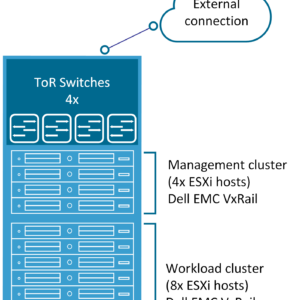

As discussed in the previous section: when you move to VMware NSX-T you are forced to use four physical NICs in each VxRail node. After installing the cards you need to make sure you have enough physical ports on your Top of Rack switches/leaf switches.

At the customer where I am currently working, they were forced to increase their Top of Rack switch capacity from two ports per server with NSX-V to four ports per server with NSX-T. This meant a full redesign of their datacenter rack topology and network topology. The spine switches were also not able to connect to that number of leaf switches.

Keep in mind: this only becomes a problem when you are running a large number of servers per rack. At this customer, they are running 32 VxRail nodes per rack. This means they require at least 32 × 4 = 128 physical switch ports per rack, not counting uplink ports.
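If you want to run the same numbers for your own racks, the arithmetic can be sketched in a few lines of Python. The 32 nodes and the two versus four ports per node come from the customer case above. The 48-port switch size and the six ports reserved for uplinks are purely assumptions I picked for the example, so replace them with the values of your own switches.

```python
import math


def node_ports_per_rack(nodes: int, nics_per_node: int) -> int:
    """Switch ports needed for the node-facing links in one rack."""
    return nodes * nics_per_node


def leaf_switches_needed(node_ports: int,
                         ports_per_switch: int,
                         uplink_ports_per_switch: int) -> int:
    """Minimum number of switches once uplink ports are set aside."""
    usable = ports_per_switch - uplink_ports_per_switch
    return math.ceil(node_ports / usable)


NODES_PER_RACK = 32      # from the customer case
PORTS_PER_SWITCH = 48    # assumption, not from the customer case
UPLINKS_PER_SWITCH = 6   # assumption, not from the customer case

for label, nics in (("NSX-V", 2), ("NSX-T", 4)):
    ports = node_ports_per_rack(NODES_PER_RACK, nics)
    switches = leaf_switches_needed(ports, PORTS_PER_SWITCH, UPLINKS_PER_SWITCH)
    print(f"{label}: {ports} node ports, at least {switches} switches per rack")
```

With these assumed switch values, the rack goes from 64 node ports on two switches to 128 node ports on four switches, which is exactly the kind of jump that also puts pressure on the spine layer.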

The two images at the top of this section give an overview of the scenarios just described: the first shows the NSX-V scenario and the second the NSX-T scenario.

Near future

I know that VMware & Dell EMC are currently working on a solution for the VxRail hardware, but time will tell. For now, keep your eyes open when moving from NSX-V to NSX-T with Dell EMC VxRail. Customers who are deploying greenfield also need to be aware that they need additional network capacity.

So that wraps up my VxRail NSX-T Considerations blog post. Thanks for reading and see you next time!

Thanks for writing the article! Valid points to look at when using VxRail in your environment.

Nice article!

I would like to suggest creating an EtherChannel using one link from each NIC instead of a bundle on one NIC. This increases redundancy and makes sure that there is full connectivity even when one NIC fails.

Jitse, thanks for responding and adding this information.

You are making a valid point; that would be the best setup to configure those NICs. The only problem is that VxRail doesn’t allow this configuration, but I am not 100% sure…

Maybe somebody is able to respond and verify this?

You are right, Mischa. VxRail does not support EtherChannel on its NICs.

And thanks for writing the article.

I like your approach for selecting NICs.

Preetam, thanks for clarifying.

Much appreciated!

Hello guys, you can make a port channel only on the production ports, not on the private management, management or vSAN ports.